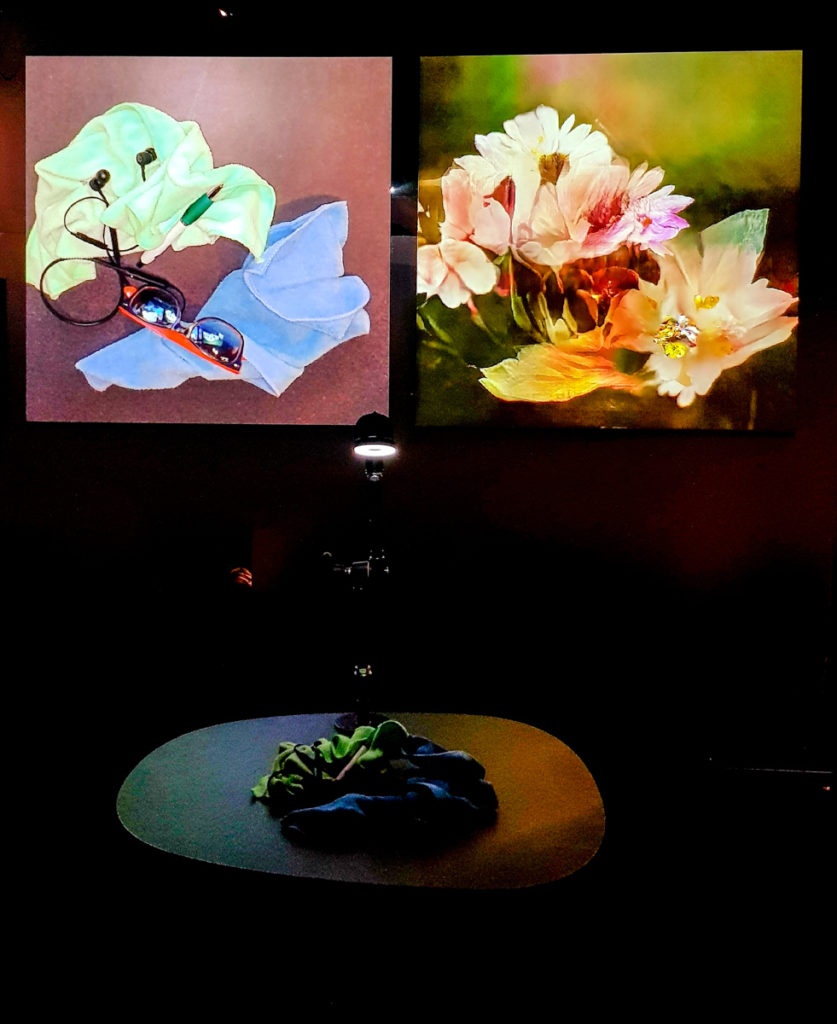

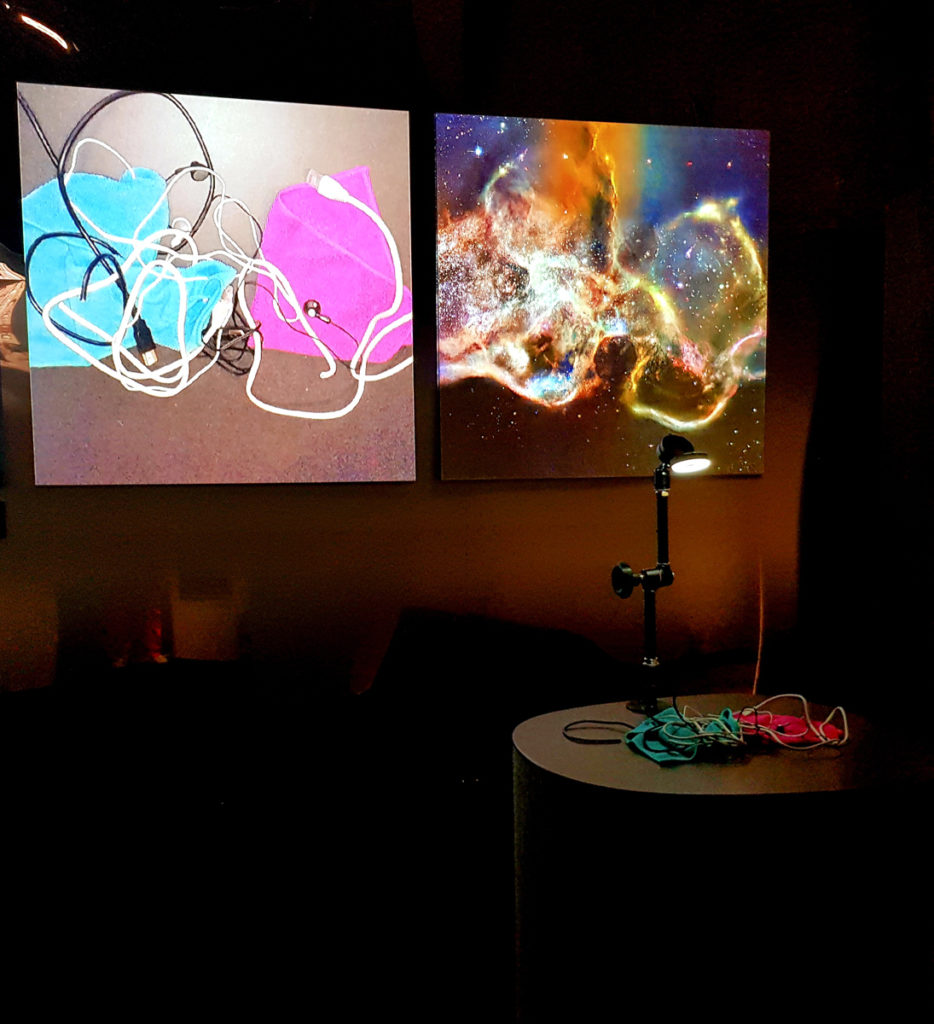

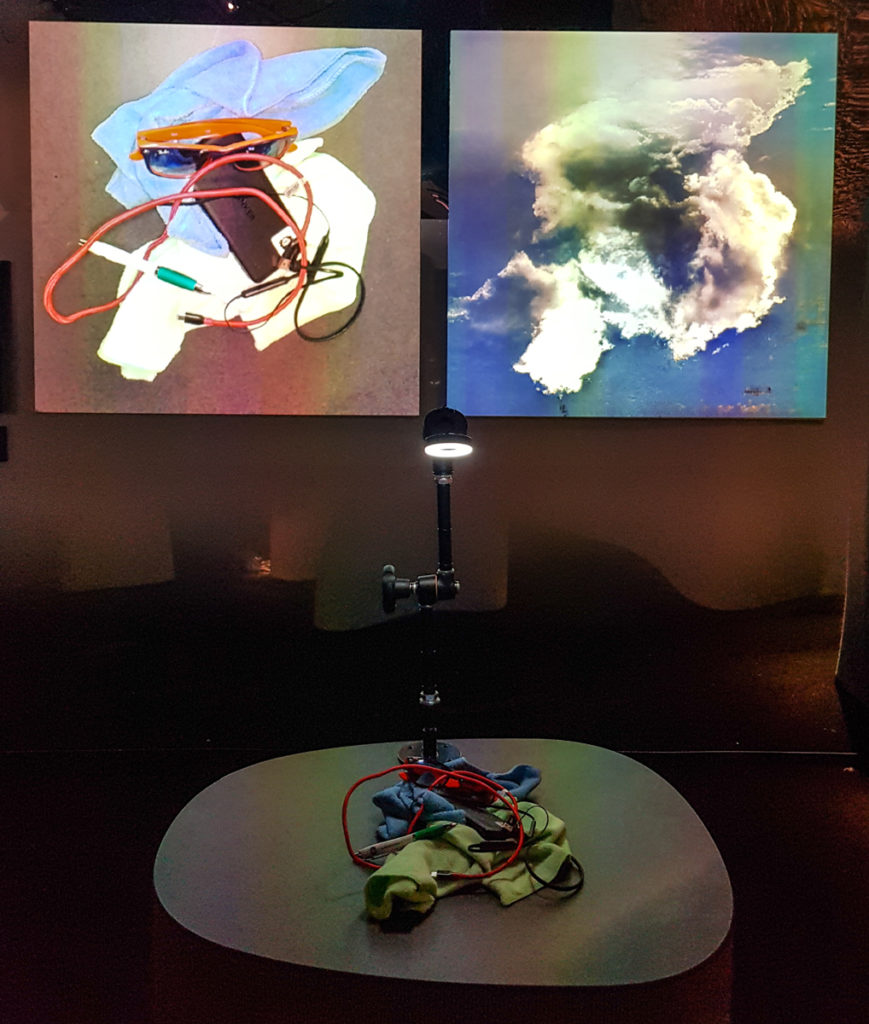

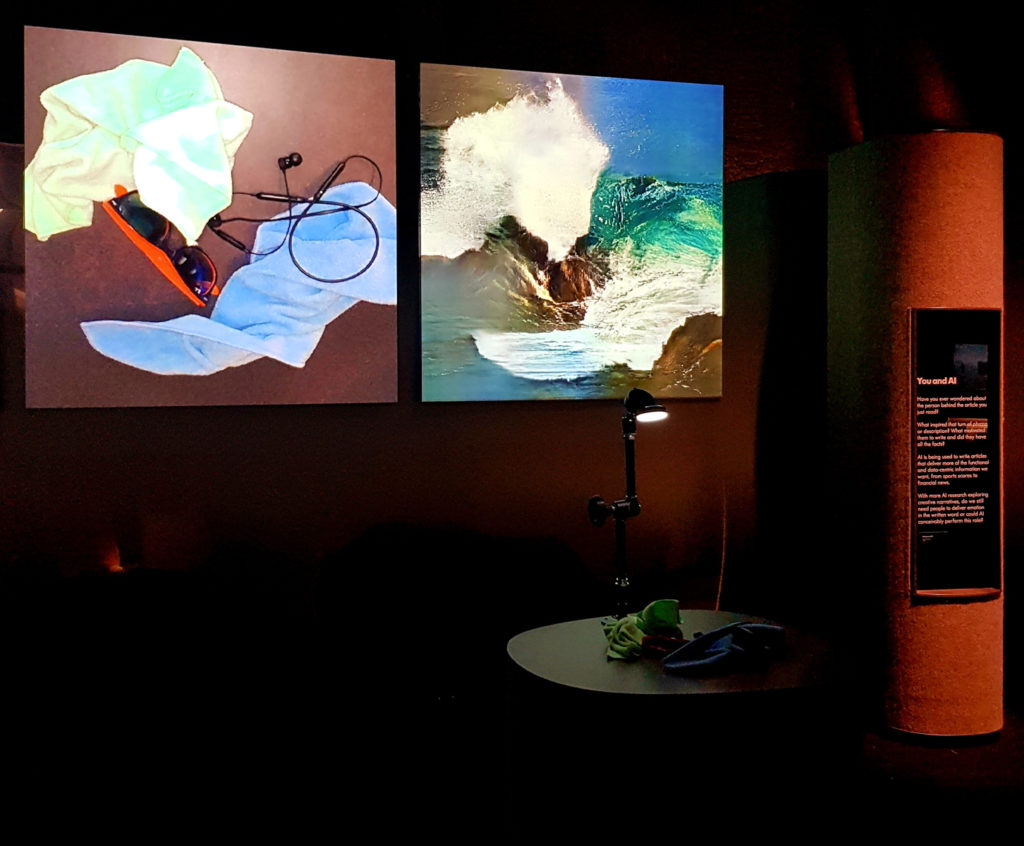

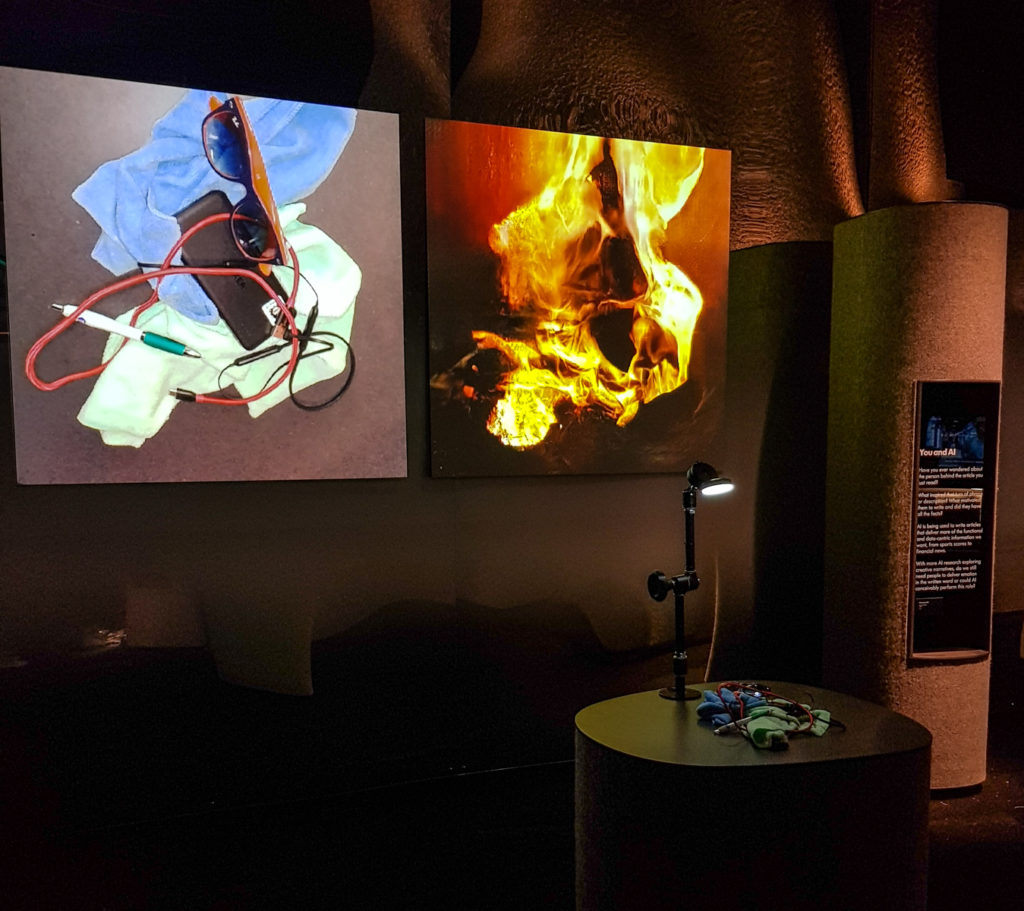

2017. Interactive installation. Custom software.

Materials: Custom software, PC, camera, projection, cables, cloth, wires.

Technique: Custom software, Artificial Intelligence, Machine Learning, Deep Learning, Generative Adversarial Networks.

Editions: 3+2AP

Collections: M+ Museum of Art

Part of the Learning to See series. For more information, please see:

- Project page for the full series

- This 20-minute presentation: 2019 – Part 3: “We see things not as they are, but as we are”.

- A paper on this work that I presented at SIGGRAPH 2019

- For a much deeper conceptual and technical analysis, please see Chapter 5 of my PhD Thesis.

This particular edition is an interactive installation in which a number of neural networks analyse a live camera feed pointing at a table covered in everyday objects. Through a very tactile, hands-on experience, the audience can manipulate the objects on the table with their hands and see corresponding scenery emerge on the display in real time, reinterpreted by the neural networks. Every 30 seconds, the scene switches to a different network, each trained on one of five datasets. Four represent the natural elements: ocean and waves (‘water’), clouds and sky (‘air’), fire, and flowers (‘earth’, and life). The fifth consists of images from the Hubble Space Telescope (representing the universe, cosmos, quintessence, aether, the void, the home of God). The interaction can be a short, quick, playful experience, or the audience can spend hours meticulously crafting their perfect nebula, shaping their favourite waves, or arranging a beautiful bouquet.